This may be an unusual post compared with the usual questions here, but I would really appreciate a thoughtful discussion of the topic.

Situation:

In the US, we currently do not have sufficient PCR testing for COVID19. As a result, we have to ration testing until we can scale it up to meet demand. We do not know when that will happen, but it will likely take weeks. I believe our failures are unique among OECD countries, although limited testing certainly affects other parts of the world as well.

Risk Prediction Models:

Risk prediction models are used frequently in medicine, but they are challenging to create & validate. Good models take time to develop, and bad models can cause more harm than good. Prediction models should therefore be subject to testing and validation prior to implementation for use in health care decision making.
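As a rough illustration of what "validation" involves, here is a minimal Python sketch of two checks commonly run on held-out data: discrimination (the c-statistic, equivalent to the AUC) and calibration-in-the-large (observed event rate versus mean predicted risk). The function names and toy data are hypothetical; a proper validation would also examine a full calibration curve and, ideally, an external cohort.

```python
from typing import Sequence


def c_statistic(y_true: Sequence[int], risk: Sequence[float]) -> float:
    """Concordance (AUC): probability that a randomly chosen case has a
    higher predicted risk than a randomly chosen non-case.
    Ties in predicted risk count as half-concordant."""
    cases = [r for y, r in zip(y_true, risk) if y == 1]
    controls = [r for y, r in zip(y_true, risk) if y == 0]
    if not cases or not controls:
        raise ValueError("need at least one case and one non-case")
    concordant = 0.0
    for c in cases:
        for n in controls:
            if c > n:
                concordant += 1.0
            elif c == n:
                concordant += 0.5
    return concordant / (len(cases) * len(controls))


def calibration_in_the_large(y_true: Sequence[int], risk: Sequence[float]) -> float:
    """Observed event rate minus mean predicted risk.
    Near 0 means the model is calibrated on average; negative means it over-predicts."""
    return sum(y_true) / len(y_true) - sum(risk) / len(risk)


if __name__ == "__main__":
    # Toy held-out data (made up for illustration, not from any real cohort).
    outcomes = [1, 1, 0, 0, 0]
    predicted = [0.9, 0.4, 0.4, 0.2, 0.1]
    print("c-statistic:", c_statistic(outcomes, predicted))
    print("calibration-in-the-large:", calibration_in_the_large(outcomes, predicted))
```

A model can discriminate well and still be badly calibrated (for example, if disease prevalence in the new setting differs from the development data), which is why both checks matter.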

Ethical Dilemma:

There is a growing number of patients in the US with suspected COVID19, but not enough tests for all of them. As a result, many patients with an uncertain pre-test probability of disease remain in limbo about their disease status and their need to isolate. Compounding the problem, untested patients who don't isolate may worsen the pandemic.

Ethical Question:

Should we rush out an unvalidated risk prediction model, tuned for high specificity, to help estimate the post-test probability of disease for patients who are unable to access testing?
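For reference, moving from a pre-test to a post-test probability uses Bayes' theorem in odds form: post-test odds = pre-test odds × likelihood ratio, where LR+ = sensitivity / (1 − specificity) for a positive result and LR− = (1 − sensitivity) / specificity for a negative one. The sketch below assumes the model's output is dichotomized into positive/negative; the sensitivity, specificity, and pre-test values in the usage lines are made up and are not taken from any published model.

```python
def post_test_probability(pre_test: float, sensitivity: float,
                          specificity: float, positive: bool) -> float:
    """Post-test probability of disease for a binary test result,
    via post-test odds = pre-test odds * likelihood ratio."""
    if not 0.0 < pre_test < 1.0:
        raise ValueError("pre_test must be strictly between 0 and 1")
    if positive:
        if specificity >= 1.0:
            raise ValueError("LR+ is undefined when specificity is 1")
        lr = sensitivity / (1.0 - specificity)  # LR+
    else:
        if specificity <= 0.0:
            raise ValueError("LR- is undefined when specificity is 0")
        lr = (1.0 - sensitivity) / specificity  # LR-
    pre_odds = pre_test / (1.0 - pre_test)
    post_odds = pre_odds * lr
    return post_odds / (1.0 + post_odds)


if __name__ == "__main__":
    # Hypothetical numbers: 20% pre-test probability, sensitivity 0.60, specificity 0.95.
    print(post_test_probability(0.20, 0.60, 0.95, positive=True))
    print(post_test_probability(0.20, 0.60, 0.95, positive=False))
```

With these illustrative values a positive prediction raises the probability from 20% to 75%, while a negative one only lowers it to about 9.5%, which is the asymmetry that makes a negative result from a high-specificity, modest-sensitivity model a weak reassurance.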

Ethical breakdown:

I would argue that rushing a model into use in an emergency is not unlike the FDA's emergency provisions for untested drugs.

In this situation, a model tuned for high specificity would, in theory, help identify patients likely to have COVID19 and encourage them to isolate. On the other hand, patients with false-positive predictions will be able to get tested within days or weeks, once tests become available, so the harm of unneeded self-isolation should be short-lived.
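To make "tuned for high specificity" concrete: given a labeled validation set, one approach is to choose the lowest risk cutoff whose specificity meets a target, which keeps as much sensitivity as possible under that constraint. This is a minimal sketch with hypothetical function names and toy data; note that sensitivity is simply whatever falls out at that cutoff, and it can be low.

```python
from typing import Sequence, Tuple


def sens_spec(y_true: Sequence[int], risk: Sequence[float], cutoff: float) -> Tuple[float, float]:
    """Sensitivity and specificity when risk >= cutoff is called 'likely COVID19'."""
    tp = sum(1 for y, r in zip(y_true, risk) if y == 1 and r >= cutoff)
    fn = sum(1 for y, r in zip(y_true, risk) if y == 1 and r < cutoff)
    tn = sum(1 for y, r in zip(y_true, risk) if y == 0 and r < cutoff)
    fp = sum(1 for y, r in zip(y_true, risk) if y == 0 and r >= cutoff)
    return tp / (tp + fn), tn / (tn + fp)


def cutoff_for_specificity(y_true: Sequence[int], risk: Sequence[float],
                           target: float) -> float:
    """Lowest observed cutoff whose specificity is at least `target`.
    Returns infinity (flag nobody) if no observed cutoff reaches the target."""
    for cutoff in sorted(set(risk)):  # specificity never decreases as the cutoff rises
        _, spec = sens_spec(y_true, risk, cutoff)
        if spec >= target:
            return cutoff
    return float("inf")


if __name__ == "__main__":
    # Toy validation data (made up for illustration).
    outcomes = [1, 1, 1, 0, 0, 0, 0, 0]
    predicted = [0.9, 0.7, 0.3, 0.6, 0.35, 0.2, 0.1, 0.05]
    t = cutoff_for_specificity(outcomes, predicted, target=0.8)
    print("cutoff:", t, "sens/spec:", sens_spec(outcomes, predicted, t))
```

In this toy example the cutoff that reaches 80% specificity misses one of the three cases, which is exactly the trade-off behind the concern about false reassurance.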

However, a bad model, even one tuned for high specificity, might give false reassurance when it returns a negative prediction. This could be mitigated by not reporting “negative” results at all; instead, results could be reported on a scale from indeterminate to highly likely to have disease.
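One way to report results without a "negative" category is to map the predicted probability onto ordered tiers whose lowest tier is "indeterminate". The sketch below assumes the model outputs a probability; the cutoffs (0.2 and 0.5) and the middle label are hypothetical placeholders, and real cutoffs would have to come from validation data.

```python
from bisect import bisect_right
from typing import Sequence

# Hypothetical cutoffs and labels, for illustration only.
CUTOFFS = (0.2, 0.5)
LABELS = ("indeterminate", "possible COVID19", "highly likely COVID19")


def risk_category(p: float, cutoffs: Sequence[float] = CUTOFFS,
                  labels: Sequence[str] = LABELS) -> str:
    """Map a predicted probability to an ordered tier; there is deliberately no 'negative' tier."""
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must be a probability in [0, 1]")
    if len(labels) != len(cutoffs) + 1:
        raise ValueError("need exactly one more label than cutoffs")
    # bisect_right counts how many cutoffs are <= p, which indexes the tier.
    return labels[bisect_right(cutoffs, p)]


if __name__ == "__main__":
    for p in (0.05, 0.35, 0.8):
        print(p, risk_category(p))
```

The design choice is that a low score only ever says "we can't tell", so a patient is never told they are COVID19-negative on the strength of an unvalidated model.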

Counter points:

This post was partly spurred by many statisticians on Twitter (whom I greatly respect) voicing concerns about rushing unvalidated prediction scores into use. They make excellent points, linked below.

The risk prediction model:

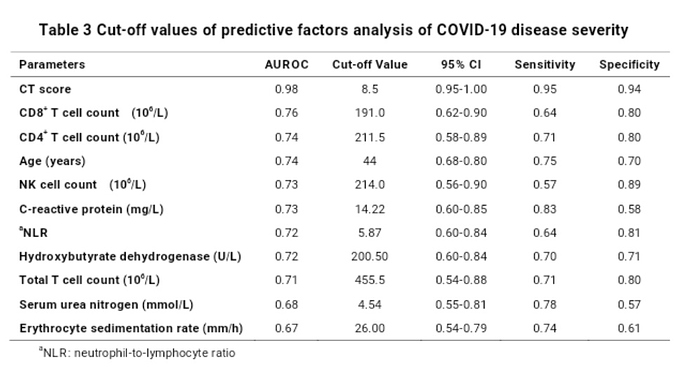

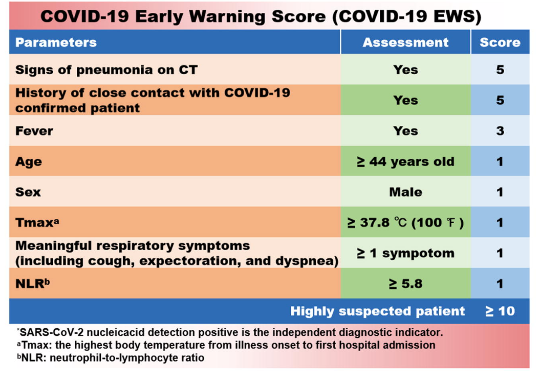

This discussion is more than academic. There is a preprint of a risk prediction model for COVID19 based on data from China. Should we use these values?

Thanks to anyone who reads this post; thoughts and other opinions would be appreciated. It's rare that an urgent clinical need finds its way here on data methods, so I appreciate people's time and perspective in making sure we care for patients in an appropriate and ethical manner.