Many people are currently rethinking how to judge the magnitude of therapeutic effects in oncology. One of the proposed tools is the ESMO Magnitude of Clinical Benefit Scale (ESMO-MCBS).

One of the controversial aspects of this scale is that the magnitude of benefit is defined by the lower limit of the confidence interval. This is the rationale:

https://esmoopen.bmj.com/content/esmoopen/2/4/e000216.full.pdf

I understand that the authors intend to favour trials designed to evaluate small hypothetical differences that end up being large.

However, using a limit of the confidence interval means the result depends on both the central estimate and the sample size. For example, I have come across people who compare drugs indirectly according to their ranking on the scale, which is derived from this lower limit of the confidence interval. Because the tool is categorical, within the same clinical setting drug A may appear more beneficial than drug B when in fact the pivotal trials simply differed in sample size.
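To make the dependence on sample size explicit, here is a sketch of the usual Wald-type interval on the log scale. The symbols $d_1$ and $d_0$ (number of events in each arm) are my notation, not the scale's, and the standard error formula is the common large-sample approximation rather than what any particular trial reported:

$$
\text{lower limit} = \exp\!\left(\log \widehat{HR} - 1.96\,\widehat{SE}\right), \qquad \widehat{SE} \approx \sqrt{\frac{1}{d_1} + \frac{1}{d_0}}
$$

For a fixed point estimate, fewer events give a larger $\widehat{SE}$ and push the lower limit further away from $\widehat{HR}$, so two trials with the same estimated HR can land on different sides of a fixed threshold.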

I wanted to ask what you think about this definition of clinical benefit.


The simulations are persuasive, but what are they attempting to do? You say people “compare drugs indirectly according to their ranking”, but the HRs (and CIs) come from different study populations, in different clinics, etc. It seems too complex for a ‘rule’ to be useful.


I agree. In clinical practice I have found that relative measures such as HRs (and their compatibility intervals) are what I mainly need from RCTs. The second important piece I need for patient-centric decision-making is an idea of the patient’s prognosis, e.g., using risk scores usually derived from observational data, although they can be derived from large RCTs as well. The third piece is to know what are the patient’s wishes.

The reason why “absolute benefit” from an RCT is in itself actually relative is that there are different ways to define absolute benefit and, depending on which “absolute benefit” we focus on, we can reach opposite conclusions and decisions, as described very well here. Here is an example: imagine an RCT in oncology with a primary outcome of overall survival (OS) that produces an HR = 0.5 favoring the new treatment, and where for the sake of simplicity all typical assumptions hold: proportional hazards with a constant event rate over time (exponential distribution of OS). If the population accrued has a good prognosis, with a median OS of 18 months in the control group, then the median OS for the new treatment would be 36 months. That is an “absolute benefit” of 18 months, which sounds pretty good for the new treatment. But in terms of hazard rates, the control group had a hazard rate of ln(2)/18 = 0.039 per month, whereas the treatment group had a hazard rate of ln(2)/36 = 0.019 per month, which is not that big a difference in absolute hazards. This makes sense because at any point in time these patients have a low instantaneous hazard of dying. Giving them the new treatment (which may, for example, be more toxic, more expensive, or more logistically demanding) will not change that by much. A patient with that prognosis who, for example, wants to live long enough to see her daughter graduate from college in 3 months may decide that she will not benefit much from the new treatment over the control. Indeed, the 3-month survival probability increases with the new treatment to just 94% compared with 89%, i.e., an increase of 5 percentage points.

Now if the population accrued has a poor prognosis, then the decision-making can change drastically. The HR is still 0.5, but if, say, the median OS in the control group is 2 months, then the median OS in the new treatment group is 4 months: a mere 2-month benefit in OS. However, in terms of hazard rates, the patient on the new treatment gets her hazard cut from 0.35 to 0.17 per month, which is a much larger absolute difference in hazards than in the previous scenario. For a patient with this prognosis who wants to live 3 months to see her daughter graduate, the new treatment should be strongly considered, even if it has unfavorable side effects or is logistically more challenging. This is because the 3-month survival probability now increases with the new treatment to about 59% compared with 35%.
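The arithmetic in both scenarios can be reproduced with a short sketch. It assumes, as in the example, exponential survival and proportional hazards; the function name `scenario` and its dictionary keys are made up for illustration.

```python
import math


def scenario(control_median, hr, horizon):
    """Exponential-survival sketch (illustrative helper, not from any library).

    control_median: median OS in the control arm, in months
    hr: hazard ratio, treatment vs control (assumed constant over time)
    horizon: time point, in months, at which survival is evaluated
    """
    # Under an exponential model, hazard = ln(2) / median.
    control_hazard = math.log(2) / control_median
    treated_hazard = hr * control_hazard
    return {
        "control_hazard": control_hazard,
        "treated_hazard": treated_hazard,
        "treated_median": math.log(2) / treated_hazard,
        # S(t) = exp(-hazard * t)
        "control_surv": math.exp(-control_hazard * horizon),
        "treated_surv": math.exp(-treated_hazard * horizon),
    }


if __name__ == "__main__":
    # Good prognosis (median 18 months) and poor prognosis (median 2 months),
    # same HR = 0.5, same 3-month goal.
    for median in (18, 2):
        s = scenario(median, hr=0.5, horizon=3)
        print(
            f"control median {median} mo: "
            f"hazards {s['control_hazard']:.3f} vs {s['treated_hazard']:.3f} per month, "
            f"3-month survival {s['control_surv']:.1%} vs {s['treated_surv']:.1%}"
        )
```

The only inputs are the control-arm prognosis, the HR, and the patient's time horizon, which is the point of the example: the same relative effect translates into very different absolute gains at 3 months.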

Notice that all that I needed in the above exercise was the patient’s specific goals, the HR = 0.5 from the RCT, and the patient’s prognosis (not necessarily derived from RCT data). The “minimum clinically significant AB” in the ESMO-MCBS tool indirectly reflects prognosis for the RCT patients and what the clinicians think would be meaningful for the patient. But I personally prefer to directly use prognosis and ask the patients themselves what they find more meaningful.


I agree with both answers. Snapinn’s article is very instructive. But what do you think in particular about the use of the lower limit of the confidence interval, instead of the central estimate?

I’m not sure it’s unusual. The lower limit may be used in drug development and decision making(?), especially in toxicology? MTD? I don’t remember exactly.

Here is an example with real data from phase III trials of third-line therapy in colon cancer.

In this scenario, the ESMO scale gives cetuximab a level 4, TAS102 a level 3, and regorafenib a level 2, where the higher the level the greater the benefit.

https://www.annalsofoncology.org/article/S0923-7534(19)34932-4/pdf

These are the raw data from the original trials:

- Cetuximab, n=204, HR for OS, 0.55; 95% CI, 0.41-0.74; p<0.001. Median OS: 9.5 vs. 4.8 months.
- TAS102, n=800, HR for OS, 0.68; 95% CI, 0.58-0.81; p<0.001. Median OS: 7.1 vs. 5.3 months.
- Regorafenib, n=760, HR for OS, 0.77; 95% CI, 0.64-0.94; p=0.0052. Median OS: 6.4 vs. 5 months.

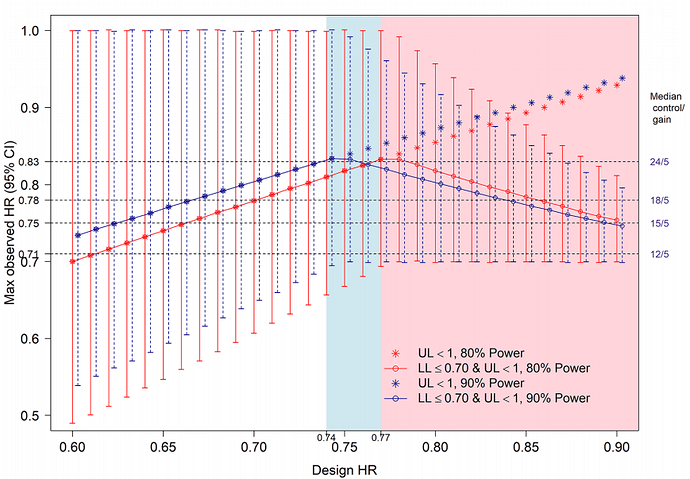

My concern is that the lower-limit criterion for the HR seems to reward small trials, and the criterion based on the difference in median OS, which could compensate for this, also does not take into account the uncertainty when the sample size is low. As far as I know, these three drugs have not been compared in head-to-head RCTs. My impression is that they have an equally low magnitude of benefit.
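As a rough check on how much of the lower limit comes from precision rather than from the point estimate, here is a sketch that back-calculates the standard error of log(HR) from each published interval. It assumes the intervals are Wald-type and symmetric on the log scale, and the published numbers are rounded, so the results are approximate. The helper names `se_log_hr` and `lower_limit` are mine.

```python
import math

Z95 = 1.959964  # standard normal quantile for a two-sided 95% interval

# (HR, lower 95% limit, upper 95% limit) as quoted in the list above
trials = {
    "cetuximab": (0.55, 0.41, 0.74),
    "TAS102": (0.68, 0.58, 0.81),
    "regorafenib": (0.77, 0.64, 0.94),
}


def se_log_hr(lower, upper):
    """Approximate SE of log(HR), assuming a symmetric interval on the log scale."""
    return (math.log(upper) - math.log(lower)) / (2 * Z95)


def lower_limit(hr, se):
    """Lower 95% limit implied by a point estimate and an SE on the log scale."""
    return hr * math.exp(-Z95 * se)


if __name__ == "__main__":
    # Hypothetical "what if": give every trial the precision of the tightest one.
    best_se = min(se_log_hr(lo, hi) for _, lo, hi in trials.values())
    for name, (hr, lo, hi) in trials.items():
        se = se_log_hr(lo, hi)
        print(
            f"{name}: HR {hr}, SE(log HR) ~ {se:.3f}, "
            f"reported lower limit {lo}, "
            f"lower limit at SE {best_se:.3f} ~ {lower_limit(hr, best_se):.2f}"
        )
```

This is only a thought experiment, not a reanalysis: it holds each point estimate fixed and asks where the lower limit would sit if all three trials had been equally precise.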


It does seem crude, and I see the point: the point estimate should get attention. I wonder if network meta-analysis applies.


Good question. On one hand, the point estimate is what the data are most compatible with. On the other hand, measuring uncertainty may be more valuable than the point estimate itself, as @Stephen eloquently argues here. The lower limit of the compatibility interval is a start but it is the width of the interval that gives more information on the precision of the trial. I thus agree with you regarding the importance of sample size and other factors affecting precision. It can of course be reasonably argued that the width of confidence intervals does not formally measure precision, but I think it is a fair assumption to make for our purposes that it does. It seems that the ESMO-MCBS approach assumes that the RCT will have higher power but there are multiple reasons why a trial that was expected to have high power may in the end have low precision.

The other concern is that focusing on the lower limit of a compatibility interval encourages artificial dichotomization. It is even more informative to look at the distribution of the compatibility intervals as argued here and here.


Just to confirm: in decision-analytic theory, the central estimate is used and measures of uncertainty are essentially irrelevant (except in the sense that an estimate may be so uncertain that you would not even consider the associated strategy as an option, so it would not enter into your decision analysis). Simple example: you need to get to an appointment and you have a data set for three different bus routes. For one of the bus routes, the data are so uncertain that you decide not to consider it. For the comparison of the remaining two, you just choose the route that is most likely to get you there in time, irrespective of the confidence interval around the difference.
