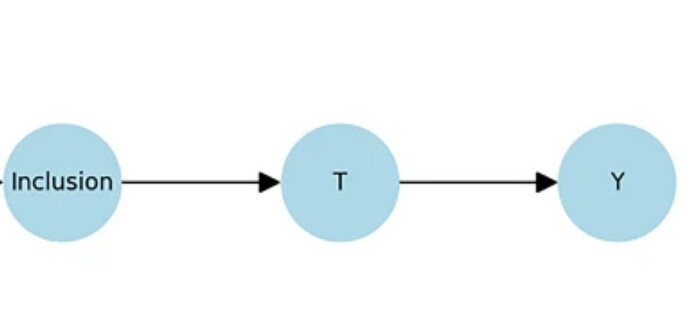

There is rapidly growing interest in integrating a simpler, clinician-facing form of structural causal modeling (SCM), called causal symbolic modeling (cSM), into critical care trial design. I am working on a paper that presents cSM as a bridge between the clinician, causal inference, and design theory.

This interest is driven by the need for better critical care trials and for better explication of cause-agnostic RCTs (see link). But the epistemic gaps between (1) design theory, (2) causal inference, and (3) clinical science are substantial.

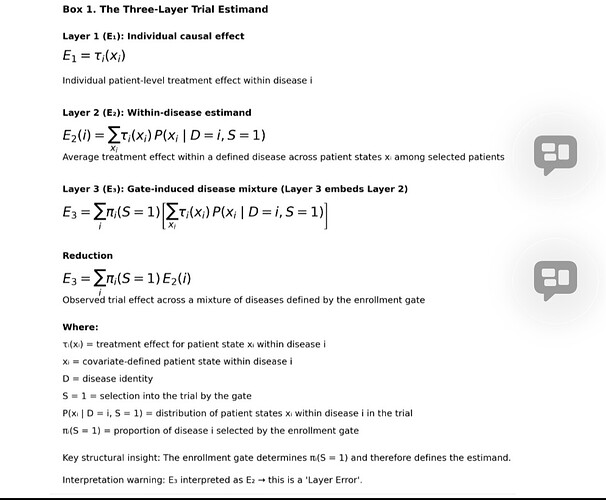

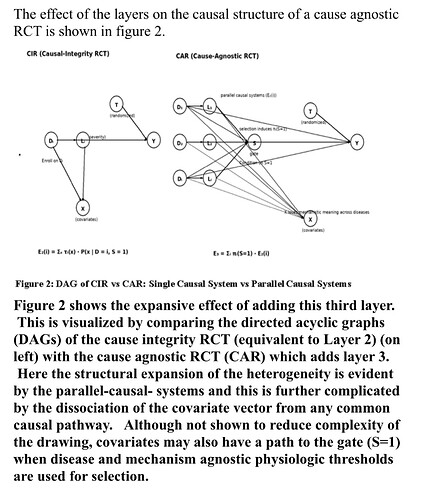

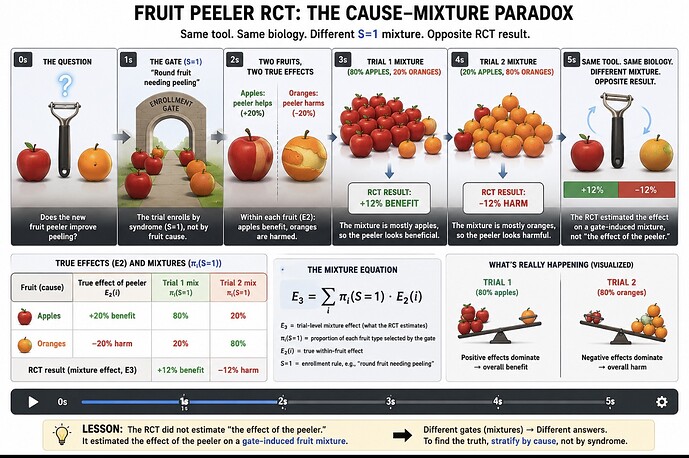

SCM itself can generate so many nodes that the model becomes overwhelming, and much of this complexity is handled by randomization. On the other hand, as critical care syndrome science has shown, randomization cannot fix some hidden pathological design features, which cSM readily detects.

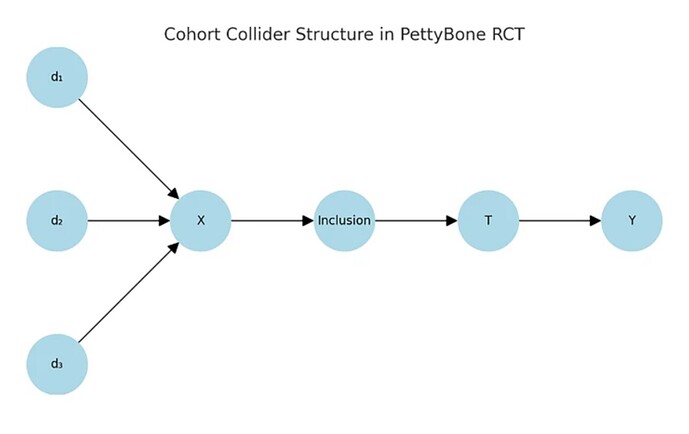

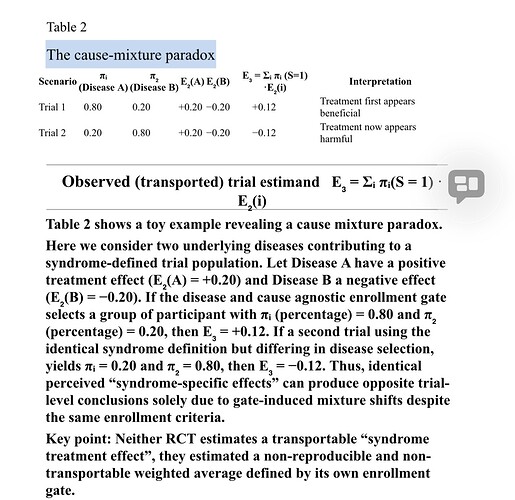

In many trials, iatrogenic (design-generated) heterogeneity is introduced before randomization. Treatment is then randomized across a mixed causal population rather than within a coherent disease or cause entity.

Without cSM, SCM can still be applied correctly. DAGs can be drawn, estimands identified, RCTs performed, and effects estimated. But if the prior structure (e.g., the gate and the outcome) is invalid, the estimand represents an average over incompatible causal mechanisms. It is mathematically valid yet causally non-transportable.
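To make the averaging argument concrete, here is a minimal Python sketch. Everything in it is hypothetical: two invented causal subgroups ("responsive" and "non-responsive") admitted through the same gate, with made-up mortality risks and shares that are not data from any trial. Under ideal randomization, the trial estimates the share-weighted average of the subgroup effects, so the same two mechanisms yield apparent benefit in one gated population and apparent harm in another.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Stratum:
    """A hypothetical causal subgroup admitted through the same trial gate."""
    name: str
    share: float         # fraction of enrolled patients (assumed, illustrative)
    risk_control: float  # mortality risk without treatment (assumed, illustrative)
    risk_treated: float  # mortality risk with treatment (assumed, illustrative)

    @property
    def effect(self) -> float:
        # Within-subgroup risk difference: treated minus control (negative = benefit)
        return self.risk_treated - self.risk_control


def pooled_risk_difference(strata: list[Stratum]) -> float:
    """Risk difference an ideally randomized trial would estimate in this
    population: the share-weighted average of the subgroup effects."""
    if abs(sum(s.share for s in strata) - 1.0) > 1e-9:
        raise ValueError("subgroup shares must sum to 1")
    return sum(s.share * s.effect for s in strata)


def gated_population(responsive_share: float) -> list[Stratum]:
    # Same two invented mechanisms; only the mix admitted by the gate changes.
    return [
        Stratum("responsive", responsive_share, risk_control=0.40, risk_treated=0.30),
        Stratum("non-responsive", 1.0 - responsive_share, risk_control=0.30, risk_treated=0.35),
    ]


trial_a = gated_population(0.5)  # gate admits a 50/50 mix
trial_b = gated_population(0.2)  # wider gate: mostly non-responsive patients

if __name__ == "__main__":
    for label, population in (("trial A", trial_a), ("trial B", trial_b)):
        # prints -0.025 for trial A and +0.020 for trial B
        print(f"{label}: pooled risk difference {pooled_risk_difference(population):+.3f}")
```

The pooled number is a correct average in both cases; it simply describes neither mechanism, and it shifts whenever the gate admits a different mix, which is one way to read "mathematically valid yet causally non-transportable."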

I hope to have the paper out soon. I would welcome any comments.

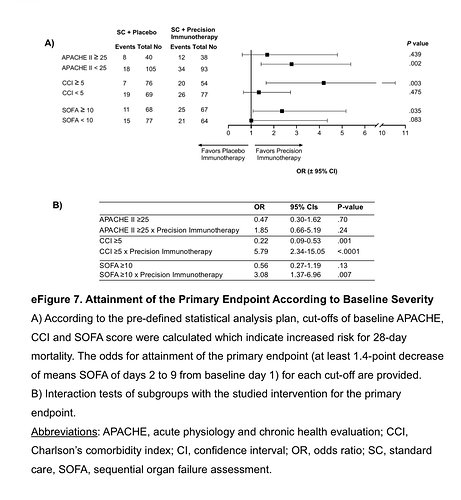

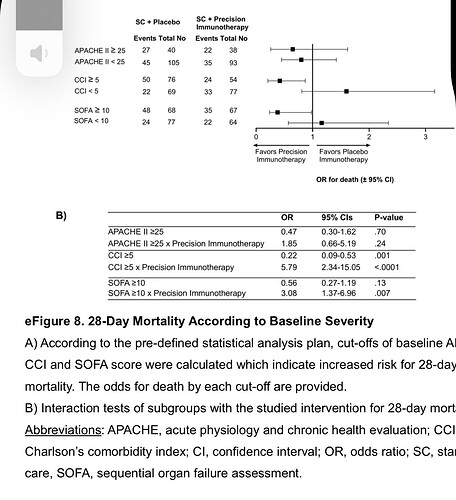

As a reminder of the need, here is a December Petty-Bone sepsis RCT from JAMA. It uses SOFA at the gate, plus some thresholds, and a "SOFA baseline to multiple endpoint differences" at the outcome.

Remember, this was published in JAMA and is expected to represent the state of the art. But I labored over Supplement 2, and I advise everyone interested in critical care science to do the same. It's data salad.

I liked the move to precision biologic targeting. That is an important restriction at the gate, but otherwise the gate was wide-open triage. It was so wide, and survival so low (what killed these patients is unknown), that the study is not interpretable. This is sad because they have a target, but both the gate and the outcome are based on the SOFA composite guessed in 1996.

This type of RCT needs to be designed with cSM.

https://jamanetwork.com/journals/jama/fullarticle/2842634

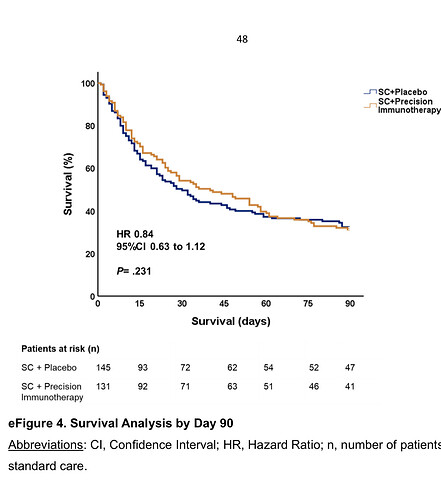

Here is the 90-day mortality.

Yet this study was reported as positive based on a 1.7-point difference in SOFA at 9 days, and the authors do not show a daily time series of SOFA.

Much of this is buried in Supplement 2. The point is that these trials are increasingly wasteful. This was a massive multicenter trial, and one has to have the highest respect for these ethically motivated investigators, but the trial lacked causal explication at the gate and outcome and was therefore doomed. Only a massive treatment effect could have rescued such a widely gated design.

Come join this discussion on X, or let's begin a discussion of cSM here.

https://x.com/soboleffspaces/status/2005739600904610040?s=46