I would like to compare several groups to a reference group, with the main idea being to show that the other groups are not inferior to the reference. Ideally, I would also like to test for superiority once non-inferiority has been shown.

The sample size is very small: around 20 participants per group. The study was designed without any sample size calculation, and no non-inferiority margin was pre-specified. The investigators, who had no prior experience, concluded non-inferiority simply because the superiority test p-value was >0.05. I need to rework the design, since the study is not publishable as it stands.

The context is a study in medically assisted procreation (MAP), comparing the number of oocytes retrieved across 5 groups (corresponding to 5 phases of the menstrual cycle).

I have several questions:

- Could such a paper be publishable, even though non-inferiority margins were only defined a posteriori?

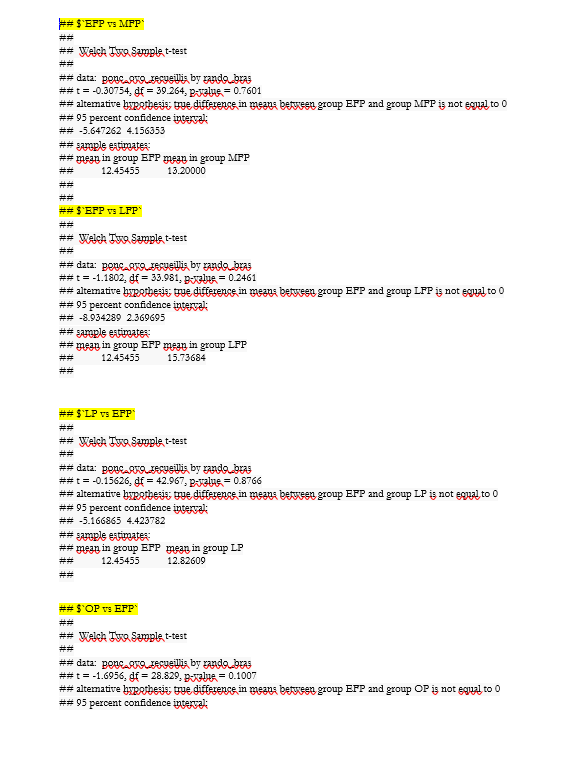

- The working hypothesis is that treatment could begin at any menstrual phase (not necessarily phase 1) without losing efficacy. Therefore, comparisons are only needed versus the first group. Should I run four separate tests? A global test? Should I correct p-values for multiple comparisons? (These should technically be independent tests, right? So no correction required?)

- Out of curiosity: how should I calculate the sample size needed for such a hypothesis? Should I compute the required N for each comparison independently and then retain the largest?

- From the observed confidence intervals of the differences between groups (which are too wide), it is already clear that the current sample size is insufficient. I am considering a Bayesian analysis, but I only have theoretical knowledge and have never elicited priors in a real study. What would you recommend regarding priors? Should I set 4 priors for the mean differences in the 4 comparisons? Would that also require 4 priors for the standard deviations? Would non-informative priors be more appropriate? What is the best way to elicit priors without being biased by the current data?

- Could such an analysis be implemented in R with JAGS?
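To make the Bayesian question concrete, here is a minimal sketch of what I have in mind, written in plain Python rather than JAGS. It assumes a normal model for each group with the standard non-informative prior on the mean and variance, so each group gets its own mean and its own standard deviation. The data, the function names, and the thresholds passed in are all made up for illustration; oocyte counts are not really normal, so this is only meant to show the shape of the analysis.

```python
import math
import random
from statistics import mean, stdev


def posterior_mean_draws(sample, n_draws, rng):
    """Draw from the posterior of a group mean under a normal model with the
    non-informative prior p(mu, sigma^2) proportional to 1/sigma^2.
    Under that prior the posterior is ybar + (s / sqrt(n)) * t with n-1 df."""
    n = len(sample)
    ybar, s = mean(sample), stdev(sample)
    df = n - 1
    draws = []
    for _ in range(n_draws):
        z = rng.gauss(0.0, 1.0)
        chi2 = rng.gammavariate(df / 2.0, 2.0)  # chi-square with df degrees of freedom
        t = z / math.sqrt(chi2 / df)            # Student t draw with df degrees of freedom
        draws.append(ybar + s / math.sqrt(n) * t)
    return draws


def prob_diff_above(reference, other, threshold, n_draws=20000, seed=1):
    """Monte Carlo estimate of P(mu_other - mu_reference > threshold | data)."""
    rng = random.Random(seed)
    ref = posterior_mean_draws(reference, n_draws, rng)
    oth = posterior_mean_draws(other, n_draws, rng)
    return sum((o - r) > threshold for o, r in zip(oth, ref)) / n_draws


# Made-up oocyte counts for two groups of 20 (NOT the study data).
phase1 = [8, 12, 10, 9, 11, 13, 7, 10, 12, 9, 11, 10, 8, 14, 9, 10, 11, 12, 10, 9]
phase3 = [9, 11, 10, 8, 12, 12, 8, 11, 10, 9, 13, 9, 7, 12, 10, 11, 10, 13, 9, 10]

# Posterior probability of non-inferiority (difference above -2 oocytes)
# and of superiority by at least 2 oocytes.
p_noninf = prob_diff_above(phase1, phase3, threshold=-2.0)
p_sup = prob_diff_above(phase1, phase3, threshold=2.0)
print(f"P(non-inferior) = {p_noninf:.3f}, P(superior by 2) = {p_sup:.3f}")
```

With informative priors the closed-form posterior above no longer applies, which is where I assume JAGS (or a similar sampler) would come in.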

The overall idea is to show that the other phases (2, 3, 4, and 5) are not inferior to phase 1, with a non-inferiority margin of 2 oocytes. If non-inferiority holds, I also want to test for potential superiority (again with a delta of 2 oocytes).
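On the sample size question, this is the kind of calculation I would try, as a rough sketch only. It uses the usual normal-approximation formula for a two-group non-inferiority test on means, n = 2σ²(z₁₋α + z₁₋β)² / (Δ + δ)² per group, where δ is the margin and Δ the assumed true difference. The standard deviation of 5 oocytes is a placeholder I invented, not an estimate from the study, and the `n_comparisons` argument is simply a Bonferroni split of alpha, which may or may not be the right correction here.

```python
import math
from statistics import NormalDist


def n_per_group_noninferiority(sd, margin, alpha=0.025, power=0.80,
                               true_diff=0.0, n_comparisons=1):
    """Approximate n per group for a non-inferiority test on a difference in
    means (normal approximation, equal group sizes, common SD).

    H0: mu_other - mu_ref <= -margin, tested one-sided at alpha / n_comparisons.
    true_diff is the assumed real value of mu_other - mu_ref.
    """
    effect = true_diff + margin  # distance from the H0 boundary
    if effect <= 0:
        raise ValueError("true_diff + margin must be positive")
    z_alpha = NormalDist().inv_cdf(1 - alpha / n_comparisons)
    z_beta = NormalDist().inv_cdf(power)
    return math.ceil(2 * (sd * (z_alpha + z_beta) / effect) ** 2)


# Placeholder SD of 5 oocytes (an assumption, not taken from the data).
n_single = n_per_group_noninferiority(sd=5.0, margin=2.0)
n_bonferroni = n_per_group_noninferiority(sd=5.0, margin=2.0, n_comparisons=4)
print(n_single, n_bonferroni)
```

Because the margin and the assumed SD are the same for all four comparisons, each comparison would give the same N under this formula, so "retain the largest" only matters if the assumptions differ between phases.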

Thank you for your help

Kind regards