Hi Erin, random error (which UIs are about) comes from variability in the data, conditional on whatever process determined treatment and sample membership.

Systematic error (bias) is a shift between the parameter your sample truly represents and the parameter you wish it represented — and UIs don’t address that at all.
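To make the split concrete, here is one way to write it down. The symbols are my own notation, not anything standard to this thread: $\hat{\theta}$ is the estimate from your data, $\theta_{\text{sample}}$ is the parameter your sample truly represents, and $\theta_{\text{target}}$ is the parameter you wish it represented.

```latex
\hat{\theta} - \theta_{\text{target}}
  = \underbrace{\left(\hat{\theta} - \theta_{\text{sample}}\right)}_{\text{random error (what a UI describes)}}
  + \underbrace{\left(\theta_{\text{sample}} - \theta_{\text{target}}\right)}_{\text{systematic error (bias)}}
```

The first term shrinks as the sample grows; the second does not depend on sample size at all.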

You’ve got two situations:

Sample A (nonrandom treatment allocation)

The sample is not randomly selected from any defined population, and treatment is assigned by the institution’s usual, potentially biased, rules. Even so, the difference in outcomes between treatment groups within this sample is a perfectly valid random variable, with a sampling distribution you can model.

Sample B (random treatment allocation)

Same size, same male/female split, but treatments are assigned randomly. The difference in outcomes now estimates the mean difference under random assignment, which coincides with the causal contrast within this source population. You can model its sampling distribution and construct a UI for the sample’s own “internal” parameter, i.e. the true mean difference in this specific source population under those assignment rules.

In both cases, the computation of the UI is mathematically valid for the parameter tied to that specific data-generating mechanism. What changes is which parameter the UI actually refers to. In the randomised case, it can be interpreted causally, because randomisation breaks confounding. In the nonrandom allocation case, it is about the association produced by that particular institutional process, which is not necessarily the causal effect and not necessarily the same as the population effect from the randomised study.
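A small simulation may help show the two situations side by side. This is only a sketch under made-up assumptions: a binary `severe` confounder, a true treatment effect of 1.0, and an institutional rule that treats 80% of severe cases and 20% of the rest. All names and numbers here are hypothetical and chosen purely for illustration.

```python
import math
import random
import statistics

TRUE_EFFECT = 1.0      # assumed causal effect of treatment (illustrative)
SEVERITY_EFFECT = 2.0  # assumed effect of being a severe case on the outcome


def draw_sample(n, randomised, rng):
    """Simulate n patients; return (treated_outcomes, control_outcomes)."""
    treated, control = [], []
    for _ in range(n):
        severe = rng.random() < 0.5  # hypothetical confounder
        if randomised:
            p_treat = 0.5                     # Sample B: coin flip
        else:
            p_treat = 0.8 if severe else 0.2  # Sample A: institutional rule
        is_treated = rng.random() < p_treat
        outcome = (TRUE_EFFECT * is_treated
                   + SEVERITY_EFFECT * severe
                   + rng.gauss(0.0, 1.0))
        (treated if is_treated else control).append(outcome)
    return treated, control


def diff_with_ui(treated, control, z=1.96):
    """Difference in means with a normal-approximation 95% interval."""
    diff = statistics.fmean(treated) - statistics.fmean(control)
    se = math.sqrt(statistics.variance(treated) / len(treated)
                   + statistics.variance(control) / len(control))
    return diff, diff - z * se, diff + z * se


rng = random.Random(0)
for label, randomised in [("A (institutional rule)", False),
                          ("B (randomised)", True)]:
    diff, lo, hi = diff_with_ui(*draw_sample(4000, randomised, rng))
    print(f"Sample {label}: diff = {diff:.2f}, 95% UI = ({lo:.2f}, {hi:.2f})")
```

Under these assumptions, the parameter Sample A identifies is 1.0 + 2.0 × (0.8 − 0.2) = 2.2, so its interval should sit near 2.2, while Sample B’s should sit near 1.0. Both intervals are computed by the same formula and both are similarly narrow; they simply refer to different parameters.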

Why this matters for “why observational studies have UIs”

The UI itself doesn’t require random sampling or random allocation to be mathematically correct — it only needs the model for the estimator’s variability to be correct. Random sampling/allocation matter only if you want the UI to describe the same target parameter you’d care about in a broader population or under causal interpretation. Observational studies often silently assume the sample’s parameter approximates some wider target, which is where the bias vs. variance confusion creeps in.

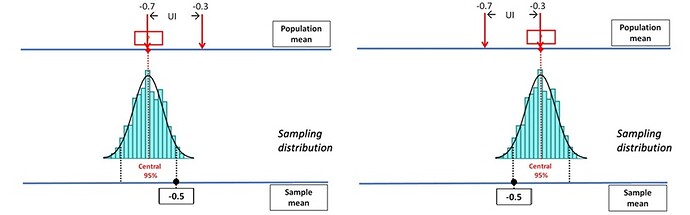

In an observational study, you can always define a perfectly valid statistical parameter for your dataset, for example: “The mean difference in outcome between treatment and control groups in this particular set of patients under the actual allocation rules used here.” Your UI is then just about random error in estimating that parameter. No problem so far.

But in practice, most observational studies aren’t really interested in just this particular sample or this institution’s idiosyncratic treatment rules. Instead, they talk and think as if they’re estimating a broader target, e.g. “The effect in the general population”, “The causal effect if treatment were assigned at random”, or “What would happen if policy changed everywhere”.

Authors implicitly assume that the sample parameter (what the UI is truly about) is equal to or close to the target parameter they care about. This assumption is rarely stated or justified explicitly. If it’s wrong (because of confounding, selection bias, measurement error, etc.), the UI still gives you precise numbers, but they’re precise about the wrong thing.

That’s why the bias–variance confusion creeps in. Variance is what the UI reflects: uncertainty due to random error. Bias is the systematic gap between the parameter your study truly identifies and the parameter you wish you were estimating. In observational studies, bias often dominates, but the UI only talks about variance, so a “narrow” UI can give a false sense of accuracy if you silently assume the bias is zero.
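One way to see “precise about the wrong thing” is to repeat a confounded study many times and count how often the UI covers each parameter. The sketch below is an illustration under invented assumptions (a binary confounder, a causal effect of 1.0, and a treatment rule that favours severe cases); none of these numbers come from a real study.

```python
import math
import random
import statistics

CAUSAL_EFFECT = 1.0    # target parameter (assumed for illustration)
SEVERITY_EFFECT = 2.0  # assumed effect of the confounder on the outcome
# Parameter the confounded sample actually identifies (2.2 here):
ASSOCIATION = CAUSAL_EFFECT + SEVERITY_EFFECT * (0.8 - 0.2)


def one_interval(n, rng, z=1.96):
    """Simulate one confounded study and return its 95% UI as (lo, hi)."""
    treated, control = [], []
    for _ in range(n):
        severe = rng.random() < 0.5
        # Hypothetical institutional rule: severe cases are treated more often.
        is_treated = rng.random() < (0.8 if severe else 0.2)
        outcome = (CAUSAL_EFFECT * is_treated
                   + SEVERITY_EFFECT * severe
                   + rng.gauss(0.0, 1.0))
        (treated if is_treated else control).append(outcome)
    diff = statistics.fmean(treated) - statistics.fmean(control)
    se = math.sqrt(statistics.variance(treated) / len(treated)
                   + statistics.variance(control) / len(control))
    return diff - z * se, diff + z * se


def coverage(n_studies, n_patients, seed=0):
    """Fraction of UIs that cover the association and the causal effect."""
    rng = random.Random(seed)
    hits_assoc = hits_causal = 0
    for _ in range(n_studies):
        lo, hi = one_interval(n_patients, rng)
        hits_assoc += lo <= ASSOCIATION <= hi
        hits_causal += lo <= CAUSAL_EFFECT <= hi
    return hits_assoc / n_studies, hits_causal / n_studies


cover_assoc, cover_causal = coverage(n_studies=500, n_patients=400)
print(f"UIs covering the sample parameter: {cover_assoc:.0%}")
print(f"UIs covering the causal target:    {cover_causal:.0%}")
```

In this setup the intervals should cover the sample’s own parameter at roughly the nominal 95% rate and almost never cover the causal target, even though every interval was computed correctly.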

In summary:

The UI in an observational study is usually fine mathematically, but if you interpret it as if it were about a different target parameter without checking the assumption that the two are equal, you’re importing bias into your conclusion without noticing. That is why careful observational studies take pains to identify and reduce bias (DAGs, etc.) before estimating the UI.