Thank you, Professor. I appreciate the feedback.

I just want to make sure I got this right…

The REV approach is much more straightforward, but is the other approach wrong (Question 1)?

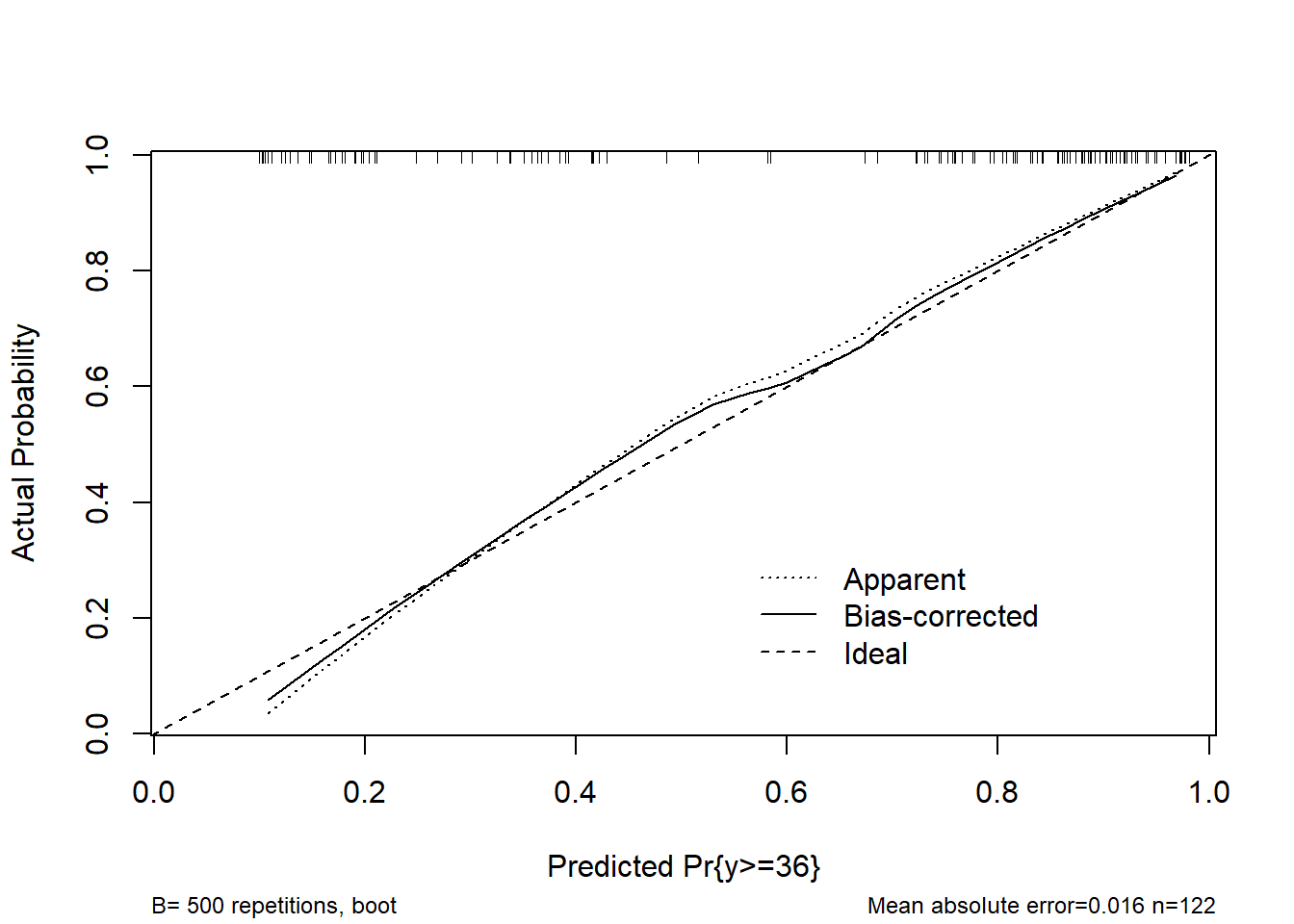

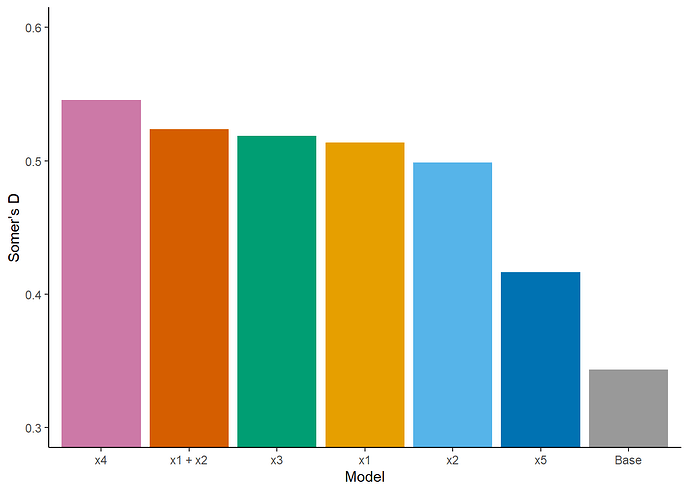

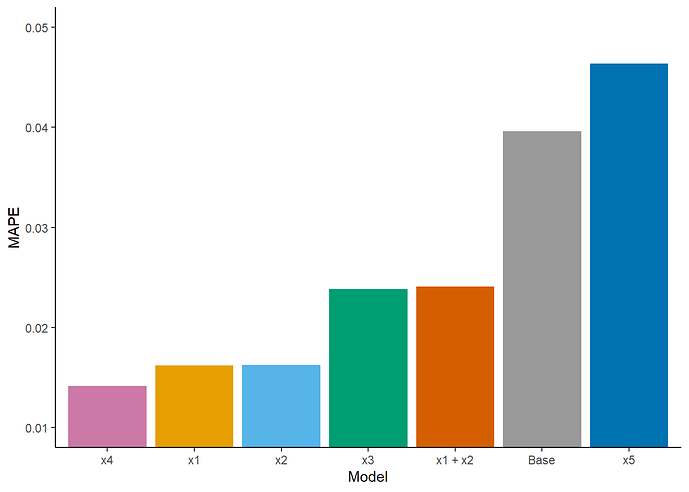

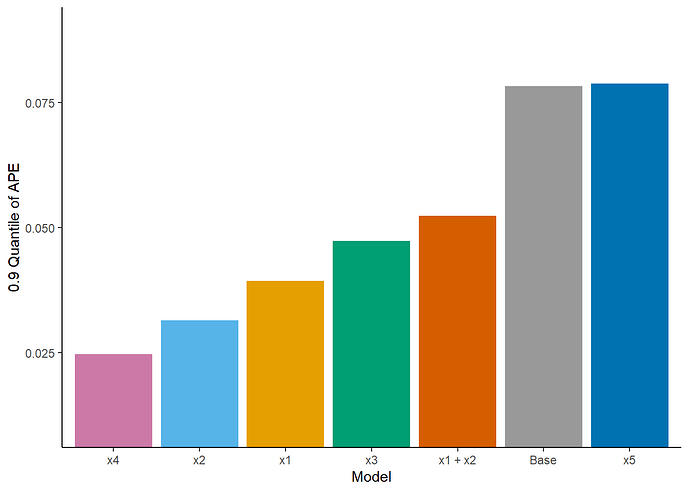

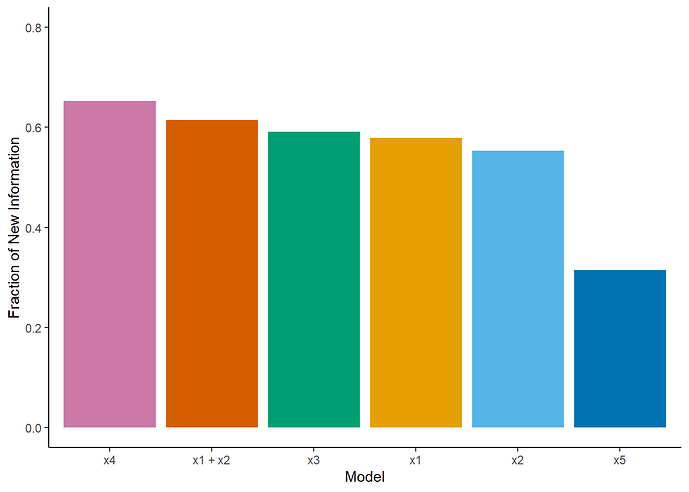

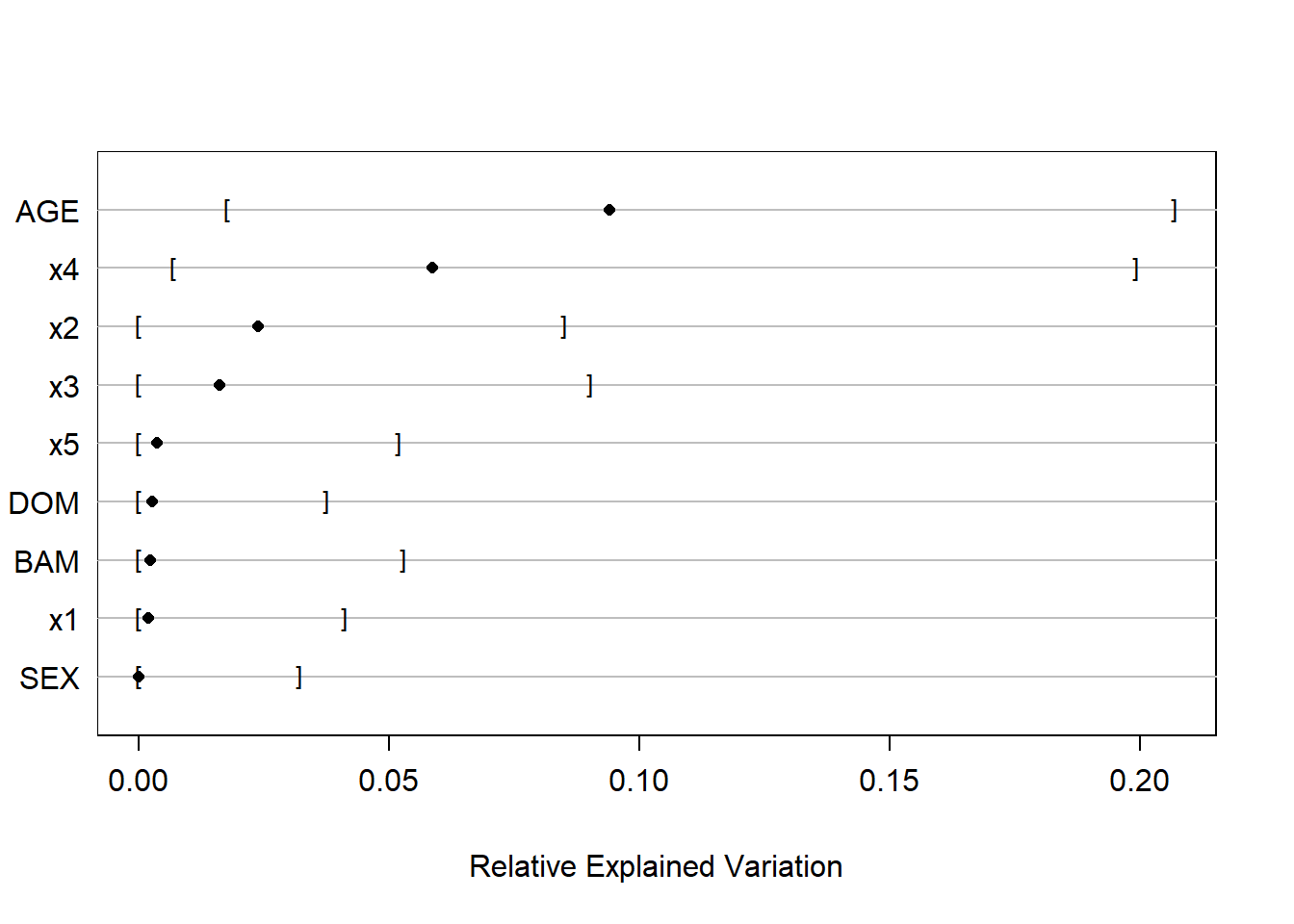

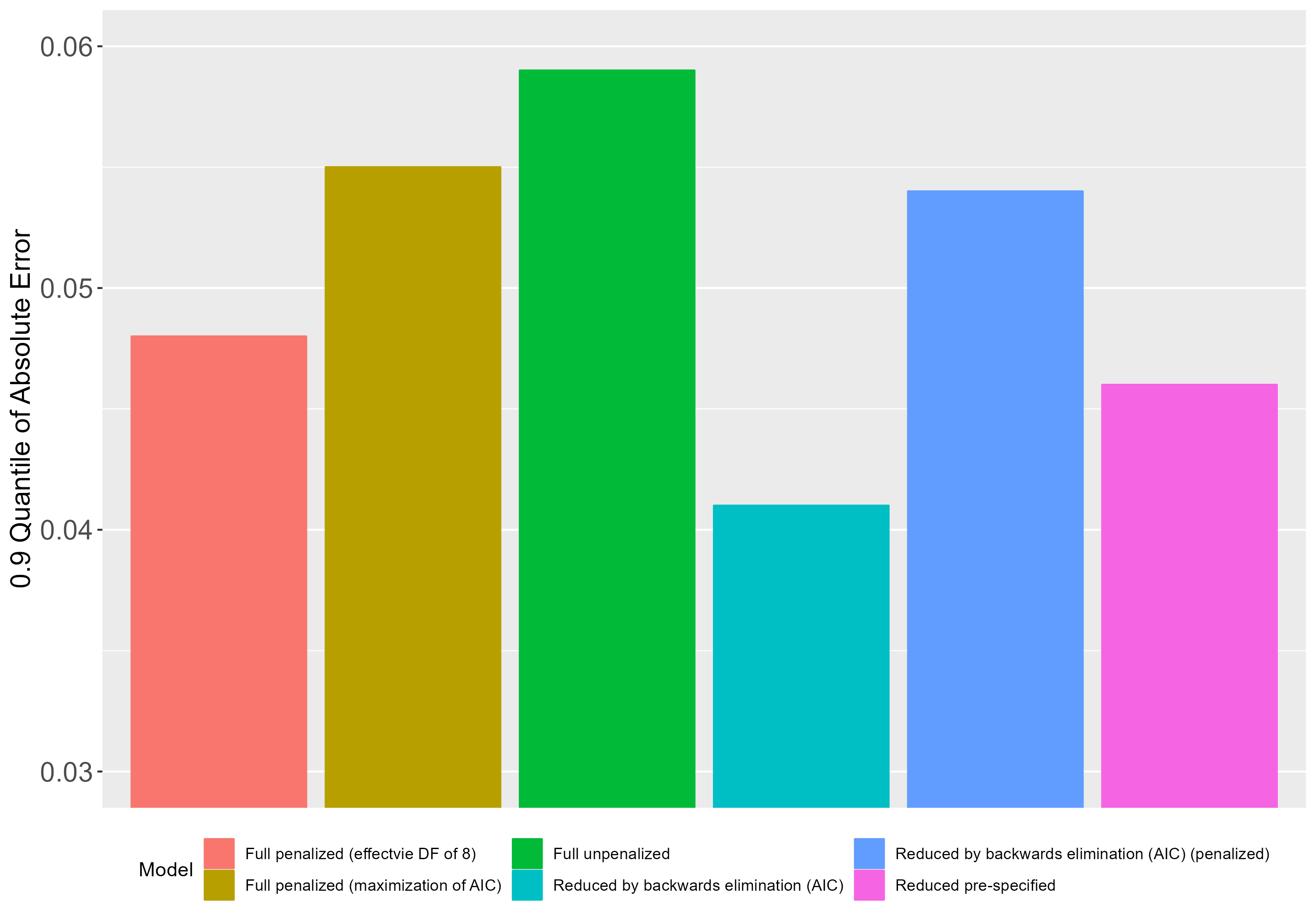

By "other approach" I mean fitting a base (reference) model plus a separate model for each new predictor (i.e., base model + new predictor), and then comparing these models on their predictive performance using discrimination, calibration, and prediction error indexes, e.g., D_{xy}, E_{max}, mean absolute prediction error, etc.
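To make the comparison concrete, here is a minimal sketch of what I mean, on simulated data rather than my own. The data frame `d`, the variables `age` and `marker`, and the five-level outcome are hypothetical stand-ins; only `lrm` and `validate` from the rms package are real. The choice of `B = 100` bootstrap repetitions is arbitrary.

```r
library(rms)

set.seed(1)
n <- 300
# Hypothetical data: one baseline predictor and one candidate new predictor
d <- data.frame(age = rnorm(n, 50, 10), marker = rnorm(n))
# Hypothetical ordinal outcome with 5 levels (my real y has many more)
d$y <- cut(0.05 * d$age + 0.8 * d$marker + rlogis(n),
           breaks = c(-Inf, 1, 2, 3, 4, Inf), labels = FALSE)

# Base model and base model + new predictor; x = TRUE, y = TRUE are
# needed so that validate() can refit the model on bootstrap resamples
base <- lrm(y ~ age,          data = d, x = TRUE, y = TRUE)
plus <- lrm(y ~ age + marker, data = d, x = TRUE, y = TRUE)

# Bootstrap validation (uses the middle intercept by default)
v_base <- validate(base, B = 100)
v_plus <- validate(plus, B = 100)

# Optimism-corrected indexes, side by side
idx <- c("Dxy", "Emax")
cbind(base = v_base[idx, "index.corrected"],
      plus = v_plus[idx, "index.corrected"])
```

In my real analysis there is one such `plus` model per candidate predictor, and the table has one column per model.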

If this approach is correct, what would be the most appropriate index for choosing the best model (Question 2)? I ask because these indexes sometimes disagree, e.g., model w is best according to index a, but model z is best according to index b.

Section 4.10 of RMS contains a list of criteria for comparing models. If I understood correctly, calibration seems to be the most important criterion if the goal is to obtain accurate probabilities for future patients, whereas discrimination is more relevant if we want to accurately predict mean y.

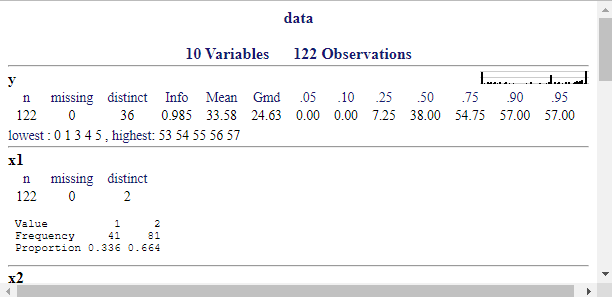

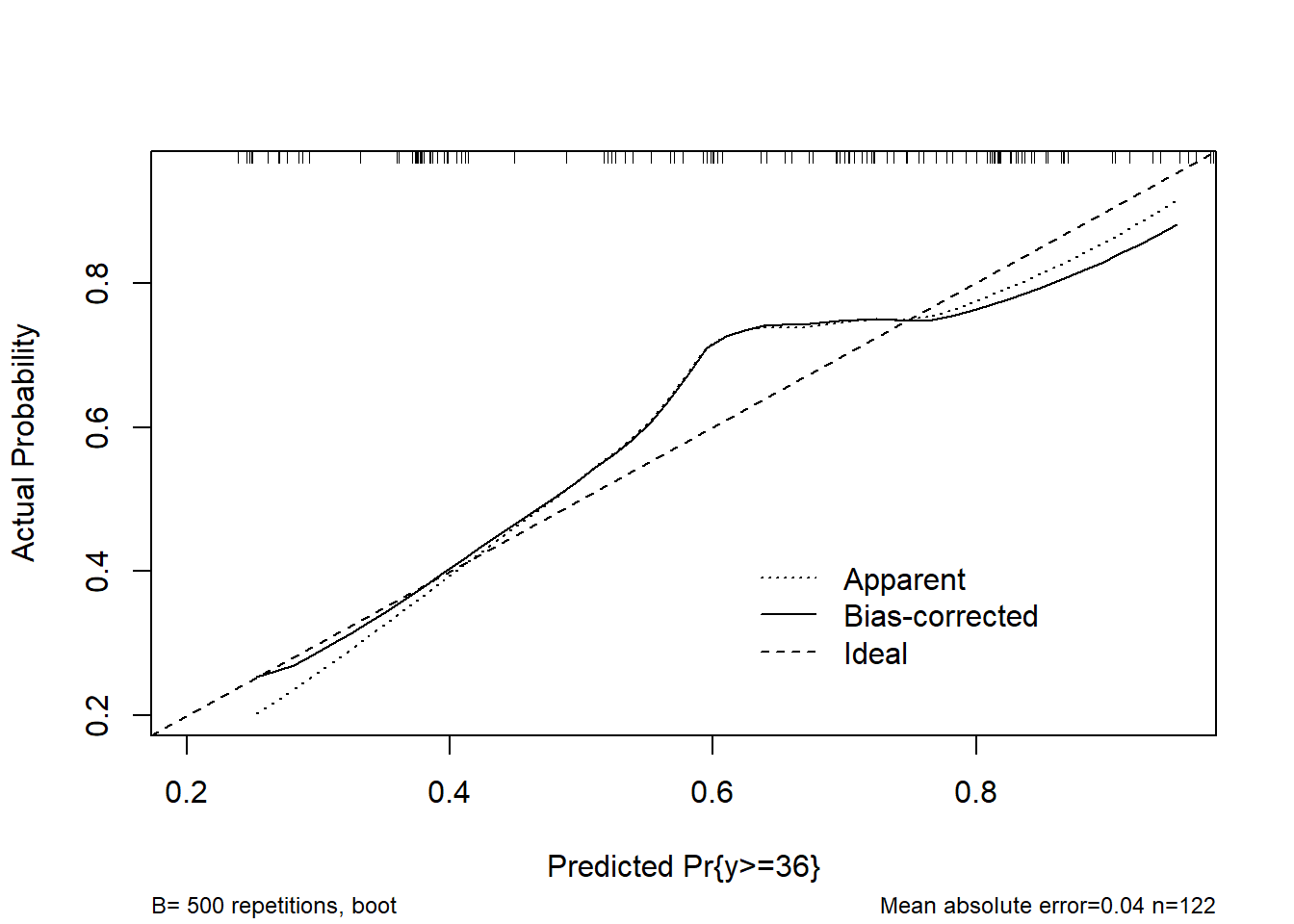

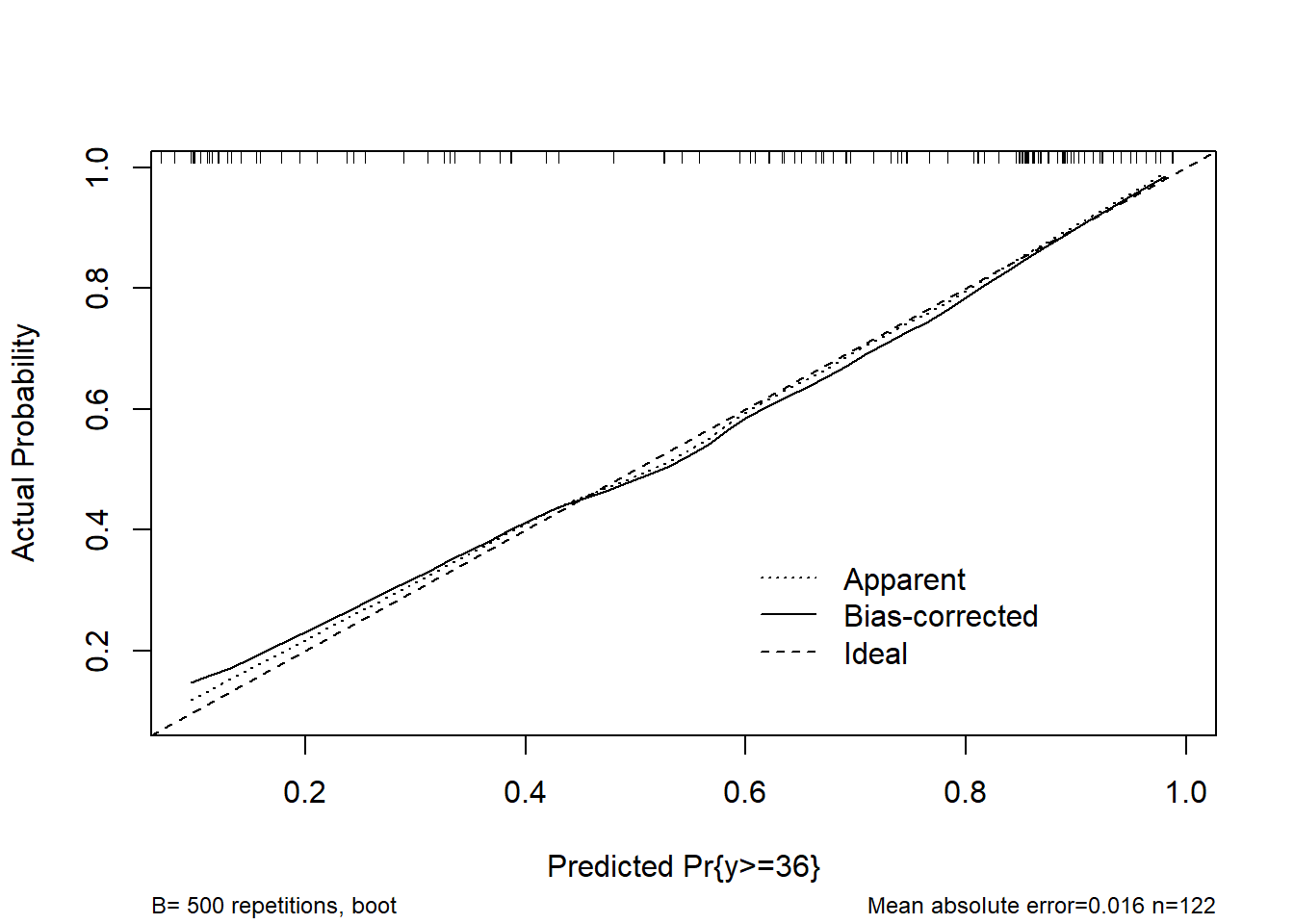

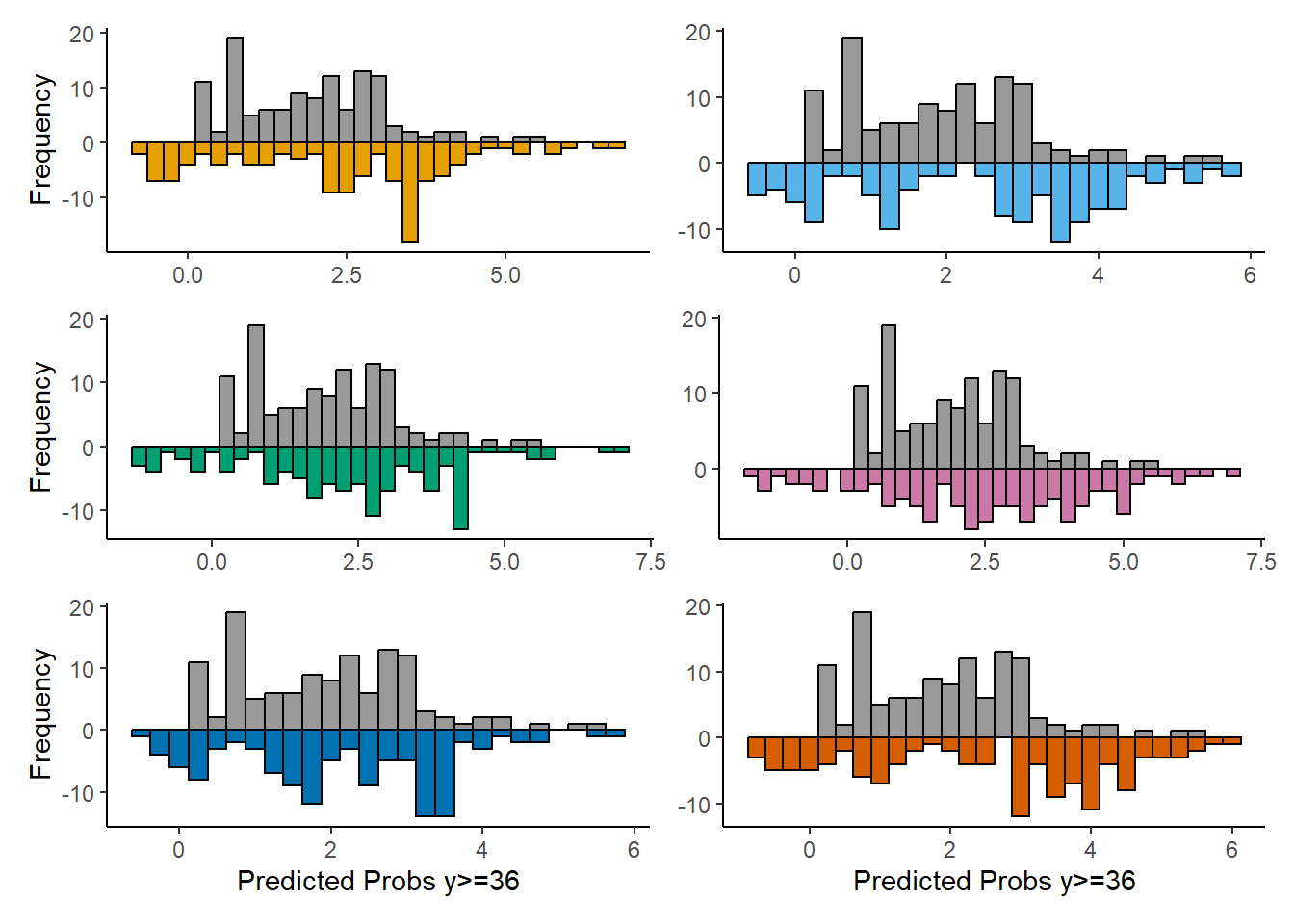

This matters to me because in my case (proportional odds models fitted with lrm) the model for y has 35 intercepts, and all the bootstrap validations and calibrations are performed using the middle intercept, but from a clinical perspective I think it is more relevant to predict mean y. Does that mean I should focus on discrimination (Question 3)?
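To be explicit about what I mean by "mean y", here is a base R sketch of how I understand the mean to be obtained from a proportional odds model: the intercepts give the exceedance probabilities P(Y >= y_j), their successive differences give the cell probabilities, and the mean is the probability-weighted sum of the y values. The function `po_mean`, the intercepts `alpha`, and the values `0:35` are made up for illustration, and `lp` here is assumed to be the linear predictor without any intercept, which may differ from what predict.lrm returns.

```r
# Mean of Y under a proportional odds model (illustrative sketch)
# lp         : linear predictor X %*% beta, excluding any intercept (assumption)
# intercepts : k intercepts, decreasing, one per P(Y >= values[j + 1])
# values     : the k + 1 distinct numeric values of Y, increasing
po_mean <- function(lp, intercepts, values) {
  exceed <- plogis(intercepts + lp)   # P(Y >= values[j + 1]), j = 1..k
  cum    <- c(1, exceed, 0)           # P(Y >= lowest value) = 1; beyond highest = 0
  p      <- -diff(cum)                # cell probabilities P(Y = values[j])
  sum(values * p)
}

# Hypothetical model with 35 intercepts, i.e., 36 distinct y values
alpha  <- seq(3, -3, length.out = 35)
yvals  <- 0:35

po_mean(0, alpha, yvals)  # mean y for a subject with lp = 0
po_mean(1, alpha, yvals)  # a larger lp shifts the mean upward
```

The point of the sketch is that the mean uses all 35 intercepts at once, whereas the validation indexes above are tied to a single (middle) intercept.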

Finally, considering the recommendations in Section 13.4.5 of RMS, when describing my selected best model, besides presenting partial effects plots and a nomogram, both on the mean scale, what else could I do (Question 4)? I think it could also be relevant to report predictions (on the mean scale and with confidence limits) for new subjects using expand.grid and predict.lrm. But I’m having trouble with the arguments of Mean.lrm:

new <- expand.grid()

m <- Mean(f, codes = TRUE, conf.int = TRUE) # what about the design matrix 'X' (Question 5)?

lp <- predict(f, new)

m(lp)

Thank you for your time, Professor.