I found a number of excellent videos on this dispute about causal inference that should be of interest.

Speaker bios

The presenters include Larry Wasserman, giving the frequentist POV, and Philip Dawid and Finnian Lattimore, giving the Bayesian one.

The ones I’ve made it through so far:

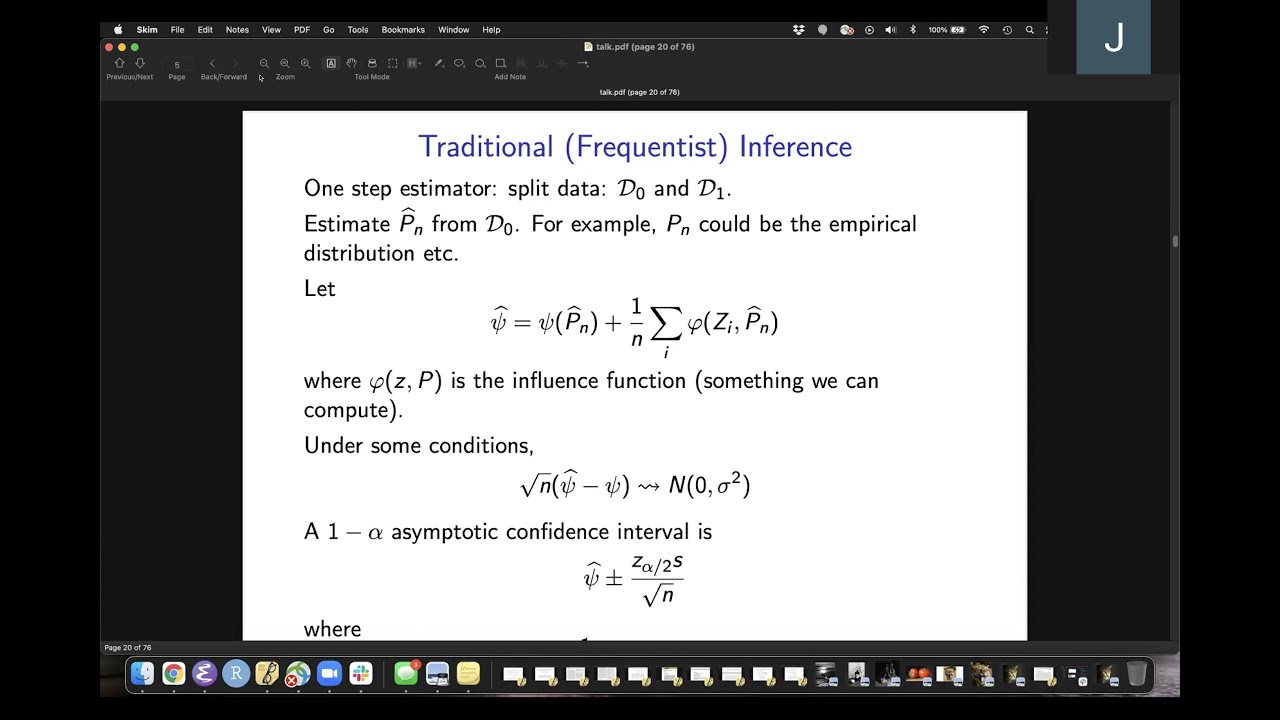

Larry Wasserman - Problems With Bayesian Causal Inference

His main complaint is that Bayesian credible intervals don’t necessarily have frequentist coverage. I’d simply point out that from Geisser’s perspective, in large areas of application, there is no parameter. Parameters are useful fictions. This is evident in extracting risk-neutral implied densities from options prices (i.e., in a bankruptcy or M&A scenario). He does, however, discuss how causal inference questions are more challenging from a computational POV than the simpler versions seen in the assessment of interventions.
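To make the coverage complaint concrete, here is a minimal simulation sketch. It is my own toy setup, not anything from Wasserman's talk: a normal mean with known noise and a conjugate normal prior, where the prior mean, prior SD, sample size, and function names are all assumptions chosen for illustration. It estimates how often a 95% credible interval contains a fixed true value under repeated sampling.

```python
import math
import random

def credible_interval(xs, sigma, prior_mean, prior_sd, z=1.96):
    """Central 95% posterior interval for a normal mean.

    Assumes known noise SD `sigma` and a conjugate Normal(prior_mean, prior_sd^2) prior.
    """
    n = len(xs)
    prior_prec = 1.0 / prior_sd ** 2
    data_prec = n / sigma ** 2
    post_var = 1.0 / (prior_prec + data_prec)
    xbar = sum(xs) / n
    post_mean = post_var * (prior_prec * prior_mean + data_prec * xbar)
    half_width = z * math.sqrt(post_var)
    return post_mean - half_width, post_mean + half_width

def coverage(true_theta, n=10, sigma=1.0, prior_mean=0.0, prior_sd=0.5,
             reps=5000, seed=0):
    """Fraction of repeated samples whose credible interval contains true_theta."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(reps):
        xs = [rng.gauss(true_theta, sigma) for _ in range(n)]
        lo, hi = credible_interval(xs, sigma, prior_mean, prior_sd)
        hits += lo <= true_theta <= hi
    return hits / reps

if __name__ == "__main__":
    # One true value at the prior mean, one far away from it.
    for theta in (0.0, 2.0):
        print(theta, coverage(theta))
```

In this toy setup the interval over-covers when the true value sits at the prior mean and falls well short of 95% when the truth is far from where the informative prior puts its mass, which is the frequentist objection in miniature.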

Philip Dawid - Causal Inference Is Just Bayesian Decision Theory

This is pretty much my intuition on the issue, but Dawid shows how causal inference problems are merely an instance of the more general Bayesian process and equivalent to Pearl’s DAGs, with the assumption of vast amounts of data.

Finnian Lattimore - Causal Inference with Bayes Rule

Excellent discussion on how to do Bayesian (Causal) inference with finite data. I’m very sympathetic to her POV that causal inference isn’t all that different from “statistical” inference, but one participant (Carlos Cinelli) kept insisting there is some distinction between “causal inference” and decision theory applications. They seem to insist that modelling the relation among probability distributions is meta to the “statistical inference” process, while that step seems naturally part of it to a Bayesian.

Panel Discussion: Does causality mean we need to go beyond Bayesian decision theory?

The key segment is the exchange between Lattimore and Carlos Cinelli from the 19:00 mark to 24:10.

From 19:00 to 22:46, Cinelli made the claim that “causal inference” is deducing the functional relation of variables in the model. Statistical inference for him is simply an inductive procedure for choosing the most likely distribution from a sample. He calls the deductive process “logical” and, in an effort to make Bayesian inference appear absurd, he concludes that “If logical deduction is Bayesian, everything is Bayesian inference.”

From 22:46 to 23:36 Lattimore describes the Bayesian approach as a general procedure for learning, and uses Planck’s constant as an example. In her description, you “define a model linking observations to the Planck’s constant that’s going to be some physics model” using known physical laws.

Cinelli objected to characterizing the use of physical laws to specify variable constraints as statistics, asking:

Cinelli: Do you consider the physics part, as part of statistics?

Lattimore: Yes. It is modelling.

Cinelli: (Thinking he is scoring a debate point) But it is a physical model, not a statistical model…

Lattimore: (Laughing at the absurdity) It is a physical model with random variables. It is a statistical model.

Lattimore effectively refuted the Pearlian distinction between “statistical inference” and “causal inference.” Does “statistical mechanics” cease to be part of physics because of the use of “statistical” models?

The Pearlian CGM school of thought essentially cannot conceive of the use of informative Bayesian inference, which physicists RT Cox and ET Jaynes advocated.
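Lattimore's "physical model with random variables" can be sketched in a few lines. This is my own illustration, not her code: I assume the photoelectric relation (stopping voltage = h·f − φ, in eV units) as the physics model, a known work function, Gaussian measurement noise, a flat prior over a grid of candidate values, and simulated (not real) measurements. All constants and names below are hypothetical choices for the example.

```python
import math
import random

H_TRUE = 4.1357e-15  # Planck's constant in eV*s; used only to simulate data
PHI = 2.3            # assumed known work function in eV (hypothetical value)
NOISE_SD = 0.05      # assumed SD of stopping-voltage measurement noise, in volts

def simulate(freqs, seed=0):
    """Synthetic stopping voltages from the physics model plus Gaussian noise."""
    rng = random.Random(seed)
    return [H_TRUE * f - PHI + rng.gauss(0.0, NOISE_SD) for f in freqs]

def grid_posterior(freqs, volts, grid):
    """Posterior over candidate h values under a flat prior on the grid."""
    log_post = [
        -sum((v - (h * f - PHI)) ** 2 for f, v in zip(freqs, volts))
        / (2 * NOISE_SD ** 2)
        for h in grid
    ]
    peak = max(log_post)  # subtract the max before exponentiating for stability
    weights = [math.exp(lp - peak) for lp in log_post]
    total = sum(weights)
    return [w / total for w in weights]

freqs = [6e14 + 1e14 * i for i in range(8)]     # light frequencies in Hz
volts = simulate(freqs)                         # simulated observations
grid = [3e-15 + i * 1e-18 for i in range(2001)] # candidate h from 3e-15 to 5e-15
post = grid_posterior(freqs, volts, grid)
post_mean = sum(h * p for h, p in zip(grid, post))
print(post_mean)
```

The physical law supplies the functional form and the noise term supplies the randomness; nothing in the update step cares which part is called "physics" and which "statistics."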

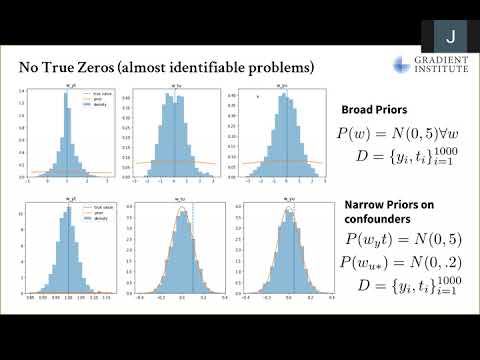

Another talk, by David Rohde, with the same title as the paper mentioned above. This was from March 2022.

David Rohde - Causal Inference is Inference – A beautifully simple idea that not everyone accepts

There are still interesting questions and conjectures I got from these talks:

- Why do frequentist procedures, which ignore information in this context, do so well?

- How might a Bayesian use frequentist methods when the obvious Bayesian mechanism is not computable?

- How does the Approximate Bayesian Computation program (ABC) relate to frequentist methods?

This paper by Donald Rubin sparked the research into avoiding intensive likelihood computations that characterizes ABC.

A relatively recent primer on ABC
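For readers who haven't seen ABC, here is a minimal rejection-sampling sketch of the basic idea: draw a parameter from the prior, simulate data from it, and keep the draw only if a summary of the simulated data lands close to the observed summary. No likelihood is ever evaluated. The toy model (normal mean, uniform prior, sample mean as the summary), the tolerance, and all function names are my own assumptions, not taken from the linked paper or primer.

```python
import random
import statistics

def abc_rejection(observed, prior_draw, simulate, summary, eps, n_draws, seed=0):
    """Basic ABC rejection sampler: returns the accepted parameter draws."""
    rng = random.Random(seed)
    s_obs = summary(observed)
    accepted = []
    for _ in range(n_draws):
        theta = prior_draw(rng)                   # 1. draw from the prior
        fake = simulate(theta, len(observed), rng)  # 2. simulate a dataset
        if abs(summary(fake) - s_obs) <= eps:     # 3. keep if summaries are close
            accepted.append(theta)
    return accepted

# Toy problem (hypothetical): unknown mean of a Normal(theta, 1) sample.
data_rng = random.Random(1)
observed = [data_rng.gauss(1.5, 1.0) for _ in range(30)]

accepted = abc_rejection(
    observed,
    prior_draw=lambda rng: rng.uniform(-5.0, 5.0),
    simulate=lambda theta, n, rng: [rng.gauss(theta, 1.0) for _ in range(n)],
    summary=statistics.mean,
    eps=0.1,
    n_draws=20000,
)
print(len(accepted), statistics.mean(accepted))
```

The accept/reject step is a repeated-sampling comparison between simulated and observed data, which is one way to see why the question about ABC and frequentist methods is a natural one to ask.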